Disaggregated white-box switching, production-grade SONiC, and automation-first operations — designed for teams who want cloud-like velocity without giving up control.

Open Networking is fundamentally about disaggregation: separating network hardware from the network operating system (NOS). Instead of being locked into a single vendor’s vertically integrated stack, you gain the ability to mix and match white-box switches with different NOS options, much like choosing between operating systems on a server. This shifts network design from proprietary, vendor-driven models to standards-based, programmable infrastructure, where Linux-based tooling, open protocols, and automation frameworks become first-class citizens. The result is a more cloud-like operating model for networks: highly scalable, API-driven, and aligned with DevOps practices.

Within this model, SONiC (Software for Open Networking in the Cloud) represents a practical, production-grade implementation of Open Networking principles. Originally developed by Microsoft for Azure, SONiC is a Linux-based NOS built around containerized services and modular components (e.g., routing via FRR). For architects, this means fine-grained control, extensibility, and vendor neutrality, enabling consistent operations across heterogeneous hardware. Strategically, adopting Open Networking with SONiC improves cost efficiency (via commodity hardware), reduces vendor lock-in, and accelerates innovation, but it also shifts responsibility toward in-house expertise in automation, lifecycle management, and integration.

These are the friction points we hear from enterprises before they move to disaggregated SONiC.

Hardware and software are tightly coupled, making it difficult to introduce multi-vendor solutions or migrate without costly upgrades or replacements.

Proprietary CLIs and weak API support restrict automation, slowing down integration with modern DevOps and orchestration tools.

Manual configurations, inconsistent workflows, and device-by-device management increase the risk of errors and operational overhead.

Hidden costs in licensing, support, and vendor-specific components (like optics) make budgeting and cost optimization challenging.

Proprietary architectures limit the ability to scale efficiently or adopt new technologies like SDN, telemetry, and cloud-native networking.

PalC’s open networking practice covers the full stack — NOS customisation, multi-vendor integration, automation, and long-term production operations.

Production-oriented SONiC rollouts on white-box platforms — feature scoping, validation, and cutover planning aligned to your fabric design (not generic “community defaults”).

Ansible-first delivery, GitOps-friendly workflows, and repeatable pipelines so changes are auditable — from day-0 provisioning to ongoing policy rollout.

Streaming telemetry and observability wired into how you operate — from baseline health checks to integration with your monitoring stack.

For exact deployment requirements, we stand up a lab environment that mirrors your target use case — so validation happens against your topology, protocols, and traffic assumptions before anything touches production.

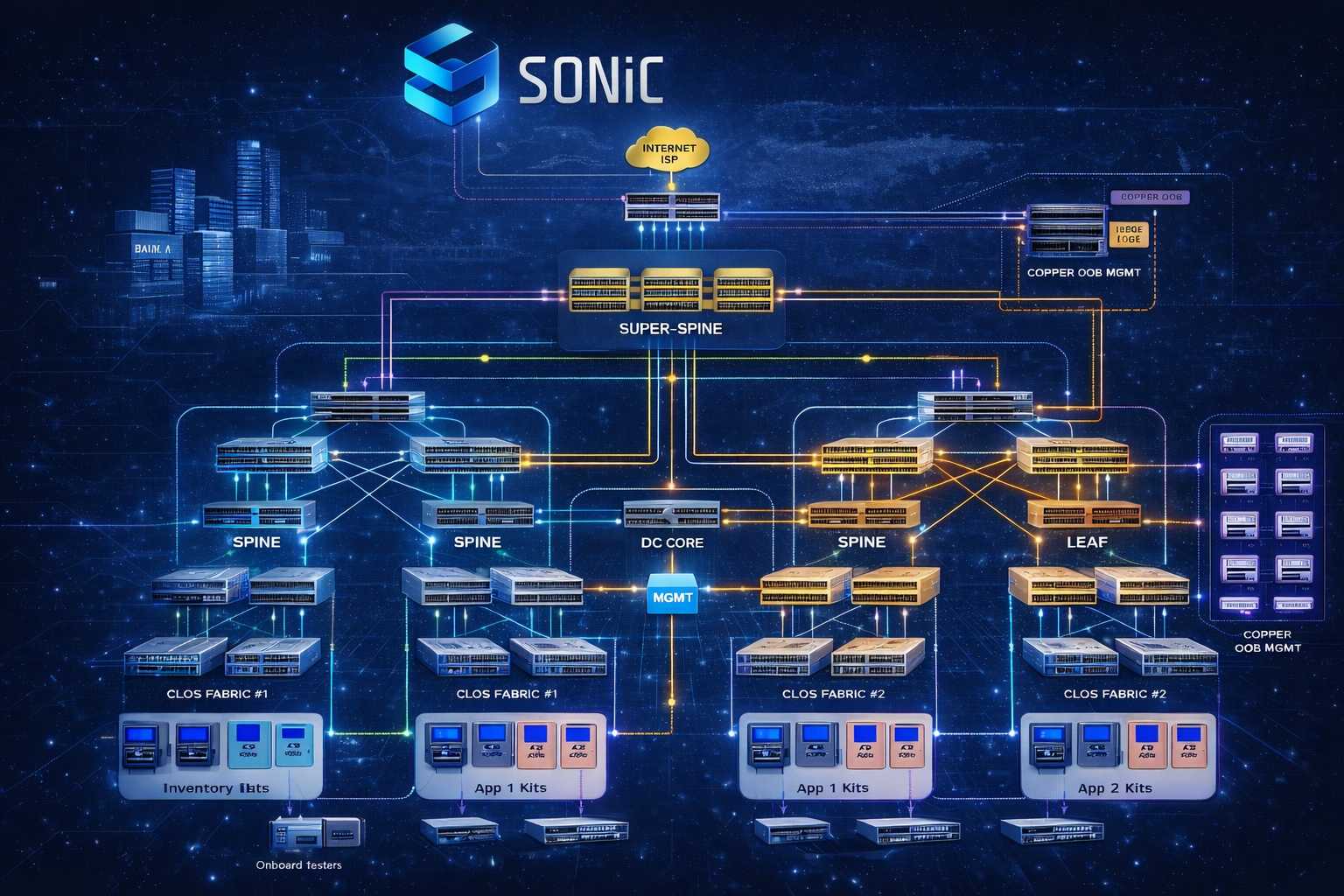

Deployment summary (example lab profile)

A phased approach that preserves business continuity while you modernise the fabric.

Topology, routing design, operational constraints, and hardware reality - documented as the baseline.

SONiC feature mapping, validation plan, and a lab fabric that mirrors your intent - before production touch.

Protocol behaviour, failure scenarios, and operational runbooks - proven in the lab first.

Controlled migration waves with rollback paths - automation-backed, change-controlled, observable.

Telemetry, upgrades, compliance posture, and operational rhythm — tuned for production reality.
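
The controlled migration waves described in the phases above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not PalC's tooling: the tier order, the `plan_waves` and `run_waves` names, and the `apply`, `healthy`, and `rollback` callbacks are all hypothetical stand-ins for whatever automation and health checks a given fabric uses.

```python
from collections import defaultdict

# Assumed migration order (hypothetical): edge tiers first, core tiers last.
TIER_ORDER = ["leaf", "border-leaf", "spine", "super-spine"]


def plan_waves(switches, max_per_wave):
    """Split {switch_name: tier} into waves that never mix tiers."""
    by_tier = defaultdict(list)
    for name, tier in sorted(switches.items()):
        if tier not in TIER_ORDER:
            raise ValueError(f"unknown tier for {name}: {tier}")
        by_tier[tier].append(name)

    waves = []
    for tier in TIER_ORDER:
        names = by_tier.get(tier, [])
        for start in range(0, len(names), max_per_wave):
            waves.append(names[start:start + max_per_wave])
    return waves


def run_waves(waves, apply, healthy, rollback):
    """Apply waves in order; roll back and stop at the first unhealthy wave.

    Returns (completed_waves, failed_wave_or_None).
    """
    completed = []
    for wave in waves:
        for switch in wave:
            apply(switch)
        if not all(healthy(switch) for switch in wave):
            # Undo only the wave that failed; earlier waves stay migrated.
            for switch in reversed(wave):
                rollback(switch)
            return completed, wave
        completed.append(wave)
    return completed, None
```

Keeping each wave inside a single tier limits the blast radius of a bad change, and returning the failed wave gives operators an explicit point at which to stop and review before continuing.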

PalC focuses on repeatable rollout: rack/stack discipline, BOM alignment, ZTP-led NOS installation, then intent-driven configuration and telemetry — not one-off board bring-up.

Components

Physical install, cabling sanity, and BOM verification so the right NOS build lands on the right switch model.

Field practicality first.

Standardised install environment so SONiC can be deployed predictably across the fleet.

Foundation for ZTP.

We do not position “custom base image builds” as the default — rollout is anchored on enterprise SONiC NOS installation via ZTP and validated images.

Production path, not science project.

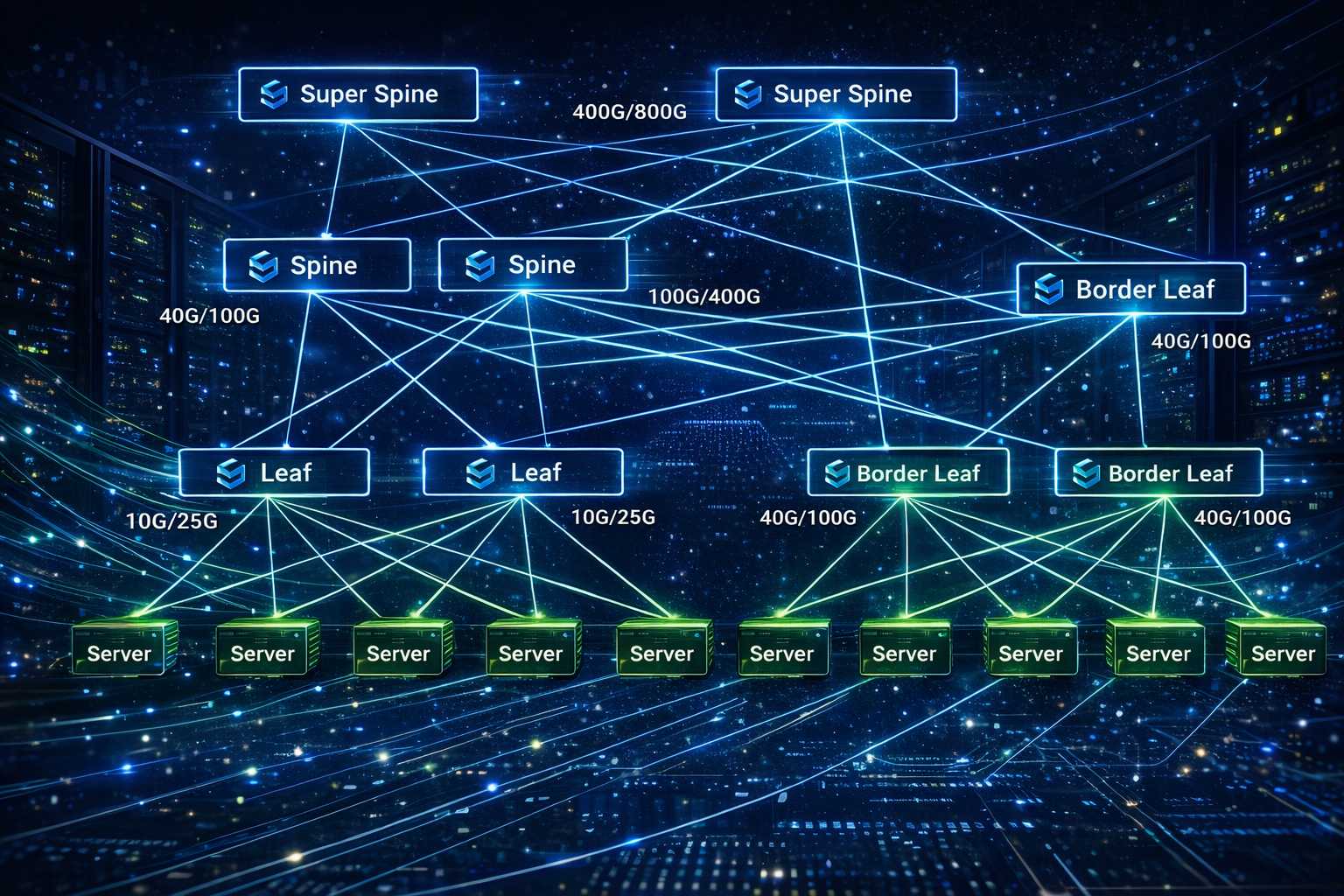

Controlled deployment patterns across spine/leaf/border roles with rollback thinking baked in.

Scale-out safely.

Intent captured as automation — Ansible/GitOps friendly — so changes are repeatable and auditable.

How teams actually operate.

Overlay/underlay services aligned to your tenancy and routing design — validated in lab first.

Matches real DC fabrics.

gNMI streaming and dashboard integration so operations teams see health early — not after an incident.

Operate with eyes open.

For complex fabrics, we combine reference SONiC configuration inputs with automation — so generated plans stay reviewable, repeatable, and safe to operationalise.

Reference config_db inputs per node role, with lanes and ports mapped per hardware SKU; topology and protocol intent captured as structured inputs.

Topology-aware plan generation with human confirmation gates — producing Ansible assets and YAML artefacts for node-specific configuration.

Manual validation in the lab first; after sign-off, fleet rollout via ZTP and controlled automation pushes.
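
As a rough illustration of the three steps above, the sketch below combines a per-SKU port map with per-role defaults to produce a config_db-style document for each node, and only emits artefacts after a confirmation callback approves a summary. Everything here is an assumption made for illustration: the SKU name, lane numbers, role defaults, and the `build_node_config` and `generate_plan` helpers are hypothetical, the table layout is a simplified subset of what a real config_db.json contains, and JSON from the standard library is used in place of the YAML artefacts mentioned above.

```python
import json

# Hypothetical per-SKU port map. Real lane assignments come from the
# platform vendor; these numbers are invented for the example.
SKU_PORT_MAPS = {
    "example-32x100g": {
        f"Ethernet{i * 4}": {
            "lanes": ",".join(str(i * 4 + n) for n in range(4)),
            "speed": "100000",
        }
        for i in range(32)
    },
}

# Hypothetical per-role reference inputs.
ROLE_DEFAULTS = {
    "leaf": {"type": "LeafRouter"},
    "spine": {"type": "SpineRouter"},
}


def build_node_config(node):
    """Merge role defaults and the SKU port map into one node's config."""
    role = ROLE_DEFAULTS[node["role"]]
    ports = SKU_PORT_MAPS[node["sku"]]
    return {
        "DEVICE_METADATA": {
            "localhost": {
                "hostname": node["hostname"],
                "hwsku": node["sku"],
                "type": role["type"],
                "bgp_asn": str(node["asn"]),
            }
        },
        "LOOPBACK_INTERFACE": {f"Loopback0|{node['loopback']}": {}},
        "PORT": {
            name: dict(attrs, admin_status="up")
            for name, attrs in ports.items()
        },
    }


def generate_plan(nodes, confirm):
    """Build every node's config, then emit artefacts only if confirmed.

    `confirm` receives a list of one-line summaries and returns True/False;
    it stands in for the human confirmation gate.
    """
    plan = {n["hostname"]: build_node_config(n) for n in nodes}
    summary = [
        f"{host}: {len(cfg['PORT'])} ports, "
        f"ASN {cfg['DEVICE_METADATA']['localhost']['bgp_asn']}"
        for host, cfg in sorted(plan.items())
    ]
    if not confirm(summary):
        return None
    return {
        host: json.dumps(cfg, indent=2, sort_keys=True)
        for host, cfg in plan.items()
    }
```

Sorting keys in the serialised output keeps diffs between plan runs small, which helps when the generated artefacts are reviewed in version control before rollout.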

Engineering-led services across design, lab validation, rollout automation, and day-2 operations — tuned for SONiC on white-box.

Fabric design sessions, logical topology, routing/overlay choices, and a documented plan your network and automation teams can execute against.

Pre-deployment testing against a lab that mirrors your target — failure modes, scale signals, and operational workflows validated early.

Staged production migration, telemetry integration, upgrade discipline, and operational guardrails so the fabric stays governable.

Environment: 250+ multi-vendor white-box switches (mixed speeds and port densities). Enterprise SONiC installed via ZTP. Objective: automate and standardise configuration deployment across Leaf, Spine, Border Leaf, and Super-Spine tiers.

How we started: deep-dive design sessions with the customer network team to understand the fabric architecture, a documented topology, and automation inputs shared early, so rollout stayed predictable across diverse hardware layers.

PalC contributes to and ships alongside community ecosystems, so your SONiC deployment stays compatible with open standards, hardware choice, and modern automation practices.

Decouple hardware and NOS; retain purchasing leverage.

APIs and streaming telemetry as first-class operations tools.

gNMI-led observability for faster triage and safer changes.

Cloud-like delivery discipline for network infrastructure.

Deployments across AI fabrics, multi-cloud, automation, and security.

ODM and Other Partners

Trusted by Industry Leaders

Next steps

Share your target architecture, hardware platforms, and migration goals. PalC can help design, validate, and operationalise a production-ready SONiC deployment.